It's Okay to be an AI Luddite

To be a contemporary Luddite is not to be anti-tech so much as pro-human when it comes to how that tech is deployed.

Earlier this month Claire Taylor, founder of The Liberated Writer, wrote a much-needed post about the language used around AI adoption in the author community, titled AI Inevitability, Acceptance, and Rape Culture: Where authors are confusing logic with coercion in the AI discussion. I'm grateful to Claire for writing what I think many of us have been feeling about the language around "AI inevitability". Today I want to build on her post by showing why I think it's more than okay for authors and artists to choose to be Luddites when it comes to AI, and what that might look like.

Claire's post recalls for me a wonderful, in-depth article in The Drift by Zoë Hitzig titled How Surveillance Became a Love Language, and specifically this quote (my emphasis):

"Technology companies have so thoroughly conditioned us to believe we are powerless when it comes to digital privacy that our attitudes toward privacy more broadly have also been warped."

The changes in technology have come at us so fast and so furious that individually and collectively we've had little time to adapt to a landscape that has been ever shifting.

And further in her essay, Zoë writes:

"It’s a strange form of Stockholm syndrome for the surveillance age — we love, and love with, the tools of our captors."

Collectively, the tech industry asked us to trust it with the details of our lives, and now many people and countries, including people working in the tech industry itself, feel that it has betrayed that trust.

“The backlash against U.S. tech companies, and the global market dependencies on the American tech stack, is part of a broader recognition that technology is not neutral, and that the companies that produce and shape this ecosystem have social and political interests in addition to their financial interests,” Jathan Sadowski, a senior lecturer at the Emerging Technologies Research Lab at Monash University in Melbourne, told Rest of World. “I don’t think this is just a phase.”

TOXIC TECH

Because "technology is not neutral" and the tech companies have demonstrated "social and political interests in addition to their financial interests" in ways that do not align with the common good, movements are now afoot. There is Resist and Unsubscribe; there is Bits of Freedom, which is committed to shifting the power of tech to the people; and there are the forty organizations writing to the European Parliament to "say no to Big Tech mass snooping on our messages!" All of which brings us to The Great Tech Industry Backlash of 2026 is coming:

"a growing army of disillusioned tech employees, mostly castoffs from a tech world that has forsaken innovation, creative freedom, and long-term vision, trading that for quantifiable productivity, OKR progress checklists, and short-term quarterly profits at any cost." - Joe Procopio, Inc. Dec. 11, 2025

As journalist and author Kara Swisher said to Katie Couric after the recent Washington Post layoffs:

"They have taken, you know, brand tech, and made it toxic"

PERSONAL AGENCY AND ACCOUNTABILITY

In this climate, and given the tech industry's shoddy reputation, why should individuals, and particularly those in the creative fields, feel obligated to passively "accept" AI? I want to zero in on something Claire wrote that really resonated with me:

"Believing that the future is inevitable and you can’t do a thing to change it is, in most respects, a horrifying state of existence. It turns us into constant victims of circumstance.

It’s an insidious thing, handing over your agency in any capacity. When you forfeit agency with respect to accountability, you forfeit it in every other part of your life." - Claire Taylor, The Liberated Writer (my emphasis)

Rather than being overwhelmed by the AI hype, each of us can take steps to combat the AI anxiety we may feel.

Howard B. Esbin, PhD suggests that AI anxiety specifically threatens two critical human capacities that require targeted cultivation:

Self-Awareness: The Capacity for Reflective Judgment

What happens when individuals give up their agency and simply accept, without question, the information an AI system provides?

"When AI systems produce hallucinations—plausible but entirely fabricated information presented with complete confidence—they don't just spread misinformation. They actively train users to doubt their own knowledge and understanding. This is algorithmic gaslighting: AI systems confidently presenting false information in ways that cause users to question their own perception and memory. Over time, this creates learned helplessness, where people become dependent on AI verification for increasingly routine cognitive tasks."

Giving up our agency to AI leaves us vulnerable to having our own minds reprogrammed in a dumbed-down fashion.

Imagination: The Capacity for "What If?"

Forfeiting our agency to AI risks narrowing the field of options available to us.

"AI anxiety also threatens imagination—not fantasy or daydreaming, but what research identifies as "constructive imagination": the capacity to envision alternative futures, generate novel solutions, and maintain agency in shaping outcomes rather than passively accepting algorithmic recommendations."

This recalls for me Walter Brueggemann's classic work The Prophetic Imagination and how fundamentally important it is to humanity that some individuals imagine alternatives to the dominant narrative.

ENTER THE LUDDITES

My personal tech motto these days has become: I'd rather be a Luddite than a lemming. Allow me to explain.

In his recent book, Blood in the Machine, Brian Merchant wrote about the original Luddites. In the New Yorker review titled Rethinking the Luddites in the Age of A.I., Kyle Chayka wrote:

“Blood in the Machine” suggests that although the forces of mechanization can feel beyond our control, the way society responds to such changes is not.

Just take a look at the "special responsibility" unions have and how they "give workers a voice over how AI affects their jobs". Some are suggesting that AI could cause workers to rise up against the corporations driving them into poverty. There is a lot of earned distrust toward the tech industry broadly, and public concern about AI only grows the more we find out about its potential impact on society and the environment.

One 1812 letter from the Luddites described their mission as fighting against “all Machinery hurtful to Commonality.” That remains a strong standard by which to judge technological gains.

In another article in The New Yorker titled Revenge of the Luddites, Chayka wrote about a fake tribunal Merchant held (my emphasis):

Onstage, Merchant leaned forward on the handle of his hammer. “You know, it’s important to point out that the Luddites were not just in a blind rage, smashing everything,” he said. “They were very tactical and very focussed on what was actually causing exploitation.”

To be a contemporary Luddite is not to be anti-tech so much as pro-human when it comes to how that tech is deployed. Does it benefit the few or the many? Individually, how we respond helps shape the answer.

SHAPING THE DIRECTION OF AI

To be a contemporary Luddite is to reclaim our agency from toxic tech, to acknowledge our own accountability respecting the tech we choose to use, and to take steps to protect our imagination in the age of AI and surveillance.

“The challenge, then, isn’t just understanding where A.I. is headed—it’s shaping its direction before the choices narrow.” - John Cassidy, How to Survive the A.I. Revolution, The New Yorker

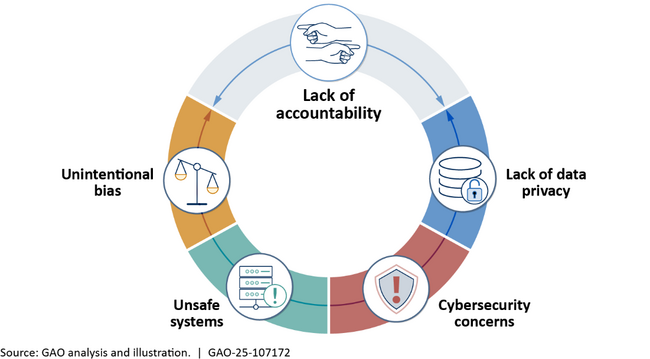

Here it helps to take a look at the concerns around AI being raised by those who are seeking to shape how AI is developed.

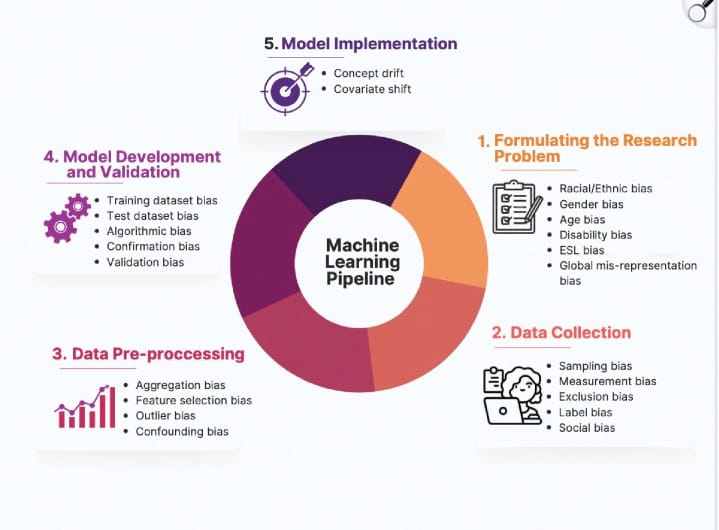

SOURCES OF BIAS IN AI

Chart from: Bias in artificial intelligence algorithms and recommendations for mitigation

AREAS OF CONCERN AND DANGER IN THE DEPLOYMENT OF AI

In January of this year Mike Thomas enumerated eighteen risks and dangers of AI, including:

- Lack of AI Transparency and Explainability

- Job Losses

- Social Manipulation through AI

- Social Surveillance

- Lack of data privacy using AI tools

- Socioeconomic Inequality

- Weakening Ethics

- Autonomous weapons powered by AI

- Financial crises brought about by AI algorithms

- Political instability

- Loss of human influence

- Increased criminal activity

- Child safety concerns

- Mental deterioration and Psychological Harm

- Uncontrollable and self-aware AI

- Intellectual Property Infringement

- Nonconsensual Image Generation

- Environmental Deterioration

Are we supposed to just forfeit our collective and individual agency and accountability and let AI companies run wild in each of these areas? Ideas such as not regulating AI for a decade would be gross collective negligence at the very least. We've already seen what happened when social media companies broke the social contract and pointed to the 1996 legislation known as Section 230 to shrug off all responsibility for "user generated content". To me, being a contemporary Luddite means beginning to hold tech companies responsible for the harms their products cause in society. We've held companies responsible before, and we are capable of doing it now.

Governments "are stepping in with new laws, frameworks and oversight bodies to mitigate its growing risks", with the EU Artificial Intelligence Act as one example.

A FAILURE OF RESPONSIBILITY

We have been here before and we have seen the results of shrugging off our own agency and accountability and trusting tech companies to do the right thing for our collective good.

In my more than 28 years in technology, I have watched the same story repeat itself with numbing consistency. Every major technological breakthrough is followed, almost immediately, by someone figuring out how to weaponize it. Too often, that harm takes the form of sexual violence and exploitation.

We saw it in the early days of AOL chat rooms, where people who intended harm operated faster than understaffed and undertrained moderators could respond. We saw it again when file-sharing platforms like The Pirate Bay enabled the mass distribution of illegal content with little friction. Later came the surge of nonconsensual intimate images (NCII), paired with cryptocurrency-enabled money laundering that now helps perpetrators evade accountability. Internet culture even has a shorthand for this inevitability: Rule 34—if something exists, it will be exploited.

That pattern is not a failure of imagination. It is a failure of responsibility. - Bill Bondurant, Chief Technology Officer, RAINN, in AI Didn’t Invent Sexual Abuse — It Just Made It Easier

Individually and collectively, we cannot shrug off responsibility for the tech we use or permit to be used in our societies. We cannot continue to follow the lead of irresponsible parties who introduce "Machinery hurtful to Commonality" and then take zero responsibility for that harm.

WHO BENEFITS

It hasn't always been the few who benefit from innovation.

“Our innovation ecosystem in the 20th century was about making opportunities for human flourishing more accessible,” says Shannon Vallor, a technology philosopher at the Edinburgh Futures Institute and author of The AI Mirror, a book about reclaiming human agency from algorithms. “Now, we have an era of innovation where the greatest opportunities the technology creates are for those already enjoying a disproportionate share of strengths and resources.”

The above quote comes from Reece Rogers's article The AI Backlash Keeps Growing Stronger.

We, individually and collectively, still have an opportunity to determine how AI is deployed and who it benefits. This isn't the time for passivity.

"WE DON'T NEED TO PASSIVELY ACCEPT OUR FATE"

At an individual level the sudden deluge of AI everywhere makes it seem as if the pushed narrative of AI is the only one available. Is it?

“We have to always remind ourselves that the direction of technology is a choice, right? We can use AI to build a surveillance economy that squeezes every drop of value out of a worker, or we can use it to build an era of shared prosperity. We know if technology were designed and deployed and governed by the people doing the work, AI wouldn’t be such a threat.” - Sarita Gupta, the Ford Foundation’s vice-president of US programs and co-author of The Future We Need: Organizing for a Better Democracy in the Twenty-First Century (my emphasis)

Authors have the opportunity to inform and shape the narrative around AI

At RightsCon 25, a conference focused on "human rights in the digital age", participants in a session called A toolkit on surveillance tech in fiction were invited to join collaboratively in reviewing a draft toolkit for creators of fictional worlds and narratives, meant to support more just depictions of surveillance technology and to promote a future where privacy is a universally respected human right.

Discussions such as those at RightsCon about alternative narratives around AI are occurring, and writers, rather than relinquishing their agency, can seek these out and help craft alternative narratives, such as those we see in solarpunk or however else we choose to envision alternatives.

We can certainly have more fun, as Nathan J. Robinson did with Echoland: A Comprehensive Guide to A Nonexistent Place.

"Echoland is both a warning and an exhortation. It warns that without a major course correction, we could be heading for a global catastrophe of an unthinkable magnitude. But it exhorts us to take control of our destiny and bring about a future of peace, ecological harmony, and abundance for all." - Nathan J. Robinson

So let's close with some sage advice from tech legend Stewart Brand:

“If you like some scenarios better than others, you can be aware of the ones you don’t like and look for signs of them, and also look at signs of the ones you want to have come to pass, and lean differentially toward them. That’s how you negotiate your way into a future you were glad of. It’s done incrementally by, among other things, lots of individuals and some institutions, and that’s how we grapple our way, muddle our way forward.”

From Steve Rose's article in The Guardian